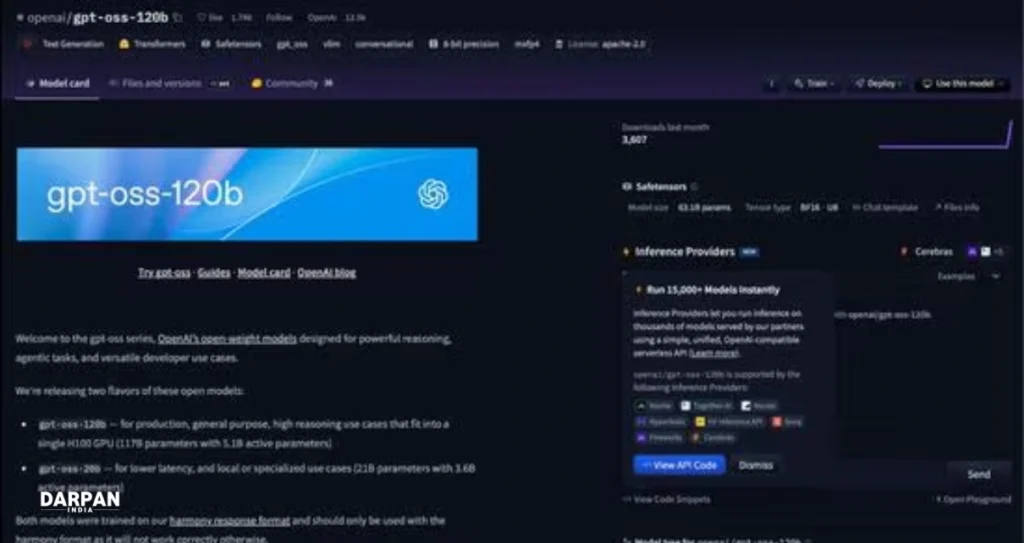

In a major shift back to its founding principles, artificial intelligence pioneer OpenAI has unveiled two open-weight language models: GPT-OSS-120B and GPT-OSS-20B. Billed as “reasoning models,” they are designed for complex, multi-step problem-solving, and their release marks OpenAI’s renewed commitment to democratizing AI technology.

Open vs. Closed: What’s the Big Deal?

Unlike popular closed models such as GPT-4, Google Gemini, or Claude, where users can only access the outputs, open-weight models publicly release their full “brain” (the trained weights, also called parameters). Users can download, run, and fine-tune them freely on their own hardware. This puts OpenAI in league with Meta’s Llama and France’s Mistral, which also publish open weights.

⚠️ Note: Open-weight ≠ open-source. Fully open-source models also share their training code and datasets; OpenAI hasn’t gone that far yet.

Why “Reasoning Models”?

These models are engineered to “think” through a problem before answering. They tackle multi-step problems, such as math puzzles or logical analysis, with greater precision, making them well suited to research, coding, and technical work.

Model Showdown

- GPT-OSS-120B: 117 billion parameters; runs on a single GPU with 80 GB of memory

- GPT-OSS-20B: 21 billion parameters; runs on devices with 16 GB of memory
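How can 117 billion parameters fit in 80 GB? A rough back-of-the-envelope estimate helps. The sketch below counts only the memory needed to hold the weights (bytes = parameters × bits per parameter ÷ 8). It ignores activations, caches, and runtime overhead. The 16-bit and 4-bit precisions are illustrative assumptions, not a description of how OpenAI actually packages the models.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory (decimal GB) needed just to store the weights."""
    bytes_needed = num_params * bits_per_param / 8  # 8 bits per byte
    return bytes_needed / 1e9                       # bytes -> decimal gigabytes

# Parameter counts come from the article; the precisions are assumptions.
for name, params in [("GPT-OSS-120B", 117e9), ("GPT-OSS-20B", 21e9)]:
    for bits in (16, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

At 16 bits per parameter, the larger model's weights alone would need about 234 GB, far beyond 80 GB. At roughly 4 bits they come to about 58.5 GB, which leaves headroom on an 80 GB GPU. Likewise, the smaller model drops from about 42 GB to about 10.5 GB. This suggests why low-precision weight formats matter for running these models locally.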

In benchmark tests like MMLU (college-level knowledge), they held their own:

- GPT-OSS-120B: 90% accuracy

- GPT-OSS-20B: 85.3% accuracy

*(For comparison, OpenAI’s own proprietary models scored 93% (o4-mini) and 87% (o3-mini).)*
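What does “90% accuracy” mean here? MMLU is a multiple-choice test, and accuracy is simply the share of questions a model answers correctly. The sketch below shows that calculation on a tiny made-up answer set. The function name and the data are hypothetical and are not taken from any real evaluation harness.

```python
def accuracy(predictions: list[str], answers: list[str]) -> float:
    """Fraction of multiple-choice questions answered correctly."""
    if len(predictions) != len(answers):
        raise ValueError("predictions and answers must have the same length")
    if not answers:
        raise ValueError("need at least one question")
    correct = sum(p == a for p, a in zip(predictions, answers))
    return correct / len(answers)

# Hypothetical toy data: 10 four-option questions (A-D), 9 answered correctly.
gold = ["A", "C", "B", "D", "A", "B", "C", "D", "A", "B"]
model = ["A", "C", "B", "D", "A", "B", "C", "D", "A", "C"]
print(f"{accuracy(model, gold):.0%}")  # prints 90%
```

On a real benchmark, the same ratio is computed over thousands of questions. Scores of 85% to 93% therefore mean only a few questions in every hundred separate these models.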

Microsoft Steps In

To boost accessibility, Microsoft will soon roll out GPU-optimized versions of GPT-OSS-20B for Windows PCs, easing local deployment.

OpenAI’s Mission “Homecoming”

After years of criticism for straying from its “open” ethos, OpenAI is making a strategic move. As the company stated:

“These models accelerate research, foster safer AI development, and give developers flexible tools to innovate.”

This launch signals a course correction, pairing cutting-edge technology with OpenAI’s original promise: AI for all.